Notes from Cortana Womens Health Risk #MachineLearning Competition-I

October 21, 2016

Another Cortana Machine Learning competition is now over (Women's Health Risk Assessment). Be sure to check the winners and, most importantly, their submissions and overall approaches to the problem. (Update 26/10: Power BI companion with final results uploaded & online here)

Must confess I hadn’t had so much fun in a while working with data, stats, code & dataviz. It’s been an amazing learning journey, really highly effective at consolidating more than a year of almost exclusive deep dive/personal quest in data, stats, R, machine learning (and yes, some math involved…).

Have to say I’m almost equally frustrated at missing the top 10… again: 6th on the public leaderboard, 13th in the final rankings. Far from one of my goals, actually winning the competition. And trust me, I gave it my all, to the last mile.

(ah… and yes, the prizes would be a great help for research funds)

(I had got a very lucky 34th place in the previous brain signals competition, but that one was completely out of reach due to the knowledge needed on signal processing/ECoG; I gave up midway, really.)

So I decided to take this one very seriously, as I knew it would be the only way to maximize learning, on the competition data, the ML process and everything data/stats/ML related. I would only stop when there was absolutely nothing more I could do, and so it was.

Another goal: ensure that the DevScope ML minds could also maximize learning, have some fun and hopefully get among the winners, or at least the top 10. Very happy to see that we’re all in the top 25. Huge congrats Bruno & Fábio. (Still couldn’t get the most brilliant mind at DevScope to enter this one though, hoping for better luck next time…)

I put so many hours… days, weeks into this one that, if there hadn’t been so much learning involved (and personal enjoyment), I would classify this as my most unproductive period… ever.

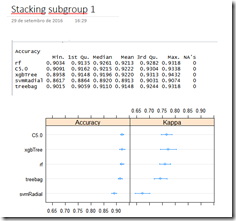

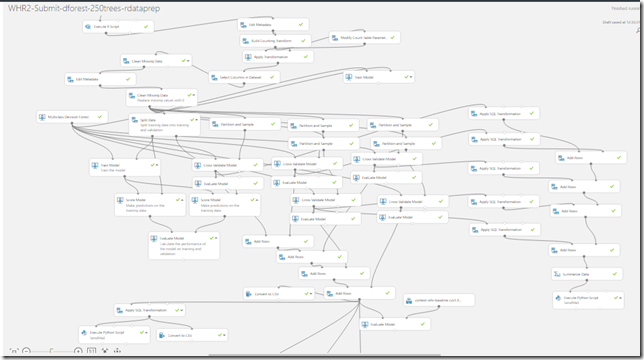

So, I’ll publish as much as I possibly can in upcoming blog posts: what worked out, what didn’t, code, tips, insights that still intrigue me, from Azure ML to Power BI, R, caret, xgboost, random forests, ensembles, learning with counts, even some Python. I also took as many notes as I could in the code files, and OneNote was a huge help; it’s great to look back now and have all this.

Another goal was to pursue both the native Azure ML and custom R tracks to compete, but preferably to win with Azure ML native modules (as I knew a few top competitors would be all R/Python). I still believe that using Azure ML modules, no code required, would be enough for a top 10-20 rank. But I also believe that, with current Azure ML capabilities, if you want to win you’re better off using native R/Python from the start.

That’s relevant. Also relevant: the top 3 winning submissions use base R, xgboost and all features, with no Azure ML native modules (Azure ML teams, some hints here…)

Still, it isn’t over yet, as now I can look back, try to understand what I could have done differently and learn from the top submissions. How much randomness is involved in a competition as close as this one? Was I bitten by excessive variance in my submissions? By multiple comparisons? Can an ensemble of the best techniques and models from the top submissions improve the best score to date? What more insights can we get from the competition data? From the submissions data?
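On the randomness question, here is a rough Python sketch of how one could get a feel for it. It is not the competition's scoring code and uses no competition data: the function names, the accuracies and the split sizes are all made up for illustration. It assumes each competitor's observed accuracy is the true accuracy plus binomial sampling noise (normal approximation), drawn independently for a public and a private split, and measures how far ranks move between the two.

```python
import math
import random


def accuracy_standard_error(accuracy, n_rows):
    """Binomial standard error of an accuracy measured on n_rows test rows."""
    return math.sqrt(accuracy * (1.0 - accuracy) / n_rows)


def simulate_rank_shift(true_accuracies, n_public, n_private, n_trials=200, seed=0):
    """Mean absolute rank change between a public and a private split.

    Assumption (a simplification): each observed score is drawn from a normal
    approximation to the binomial, independently per competitor. In a real
    competition all submissions are scored on the same rows, so their errors
    are correlated and the shuffle may well be smaller than this suggests.
    """
    rng = random.Random(seed)

    def observed(accuracy, n_rows):
        return rng.gauss(accuracy, accuracy_standard_error(accuracy, n_rows))

    def ranks(scores):
        # Rank 1 is the highest score.
        order = sorted(range(len(scores)), key=lambda i: -scores[i])
        rank_of = [0] * len(scores)
        for position, competitor in enumerate(order, start=1):
            rank_of[competitor] = position
        return rank_of

    total_shift = 0.0
    for _ in range(n_trials):
        public = ranks([observed(a, n_public) for a in true_accuracies])
        private = ranks([observed(a, n_private) for a in true_accuracies])
        shifts = [abs(p - q) for p, q in zip(public, private)]
        total_shift += sum(shifts) / len(shifts)
    return total_shift / n_trials


if __name__ == "__main__":
    # Hypothetical field: 20 competitors with identical true accuracy.
    field = [0.86] * 20
    print("standard error on 1000 rows:", accuracy_standard_error(0.86, 1000))
    print("mean rank shift:", simulate_rank_shift(field, 1000, 3000))
```

With a field of equally good models, any rank movement in this sketch is pure noise, which is the worst case; feeding in a spread of true accuracies shows how large the gaps need to be before the public ranking becomes trustworthy.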

Awesome journey, so much still to learn… Hope to share as much as I can, stay tuned!

Rui

(just the tip of the iceberg really…)

![clip_image001[6] clip_image001[6]](https://rquintino.files.wordpress.com/2016/10/clip_image0016_thumb1.png?w=644&h=286)

![clip_image001[10] clip_image001[10]](https://rquintino.files.wordpress.com/2016/10/clip_image00110_thumb.png?w=160&h=217)

![clip_image001[14] clip_image001[14]](https://rquintino.files.wordpress.com/2016/10/clip_image00114_thumb.png?w=289&h=197)

![clip_image001[12] clip_image001[12]](https://rquintino.files.wordpress.com/2016/10/clip_image00112_thumb.png?w=286&h=206)

![clip_image001[16] clip_image001[16]](https://rquintino.files.wordpress.com/2016/10/clip_image00116_thumb1.png?w=644&h=346)

You can be running your first submission in minutes using the online tutorial. Then it’s up to you.
